When to Call Me

I work at the layer where human interpretation determines whether products succeed. Teams bring me in when:

You’re building AI features and can’t tell whether they’re actually helping.

The model performs well, but users hesitate, override, or disengage. I surface the gap between technical accuracy and human confidence, then translate it into design and product direction.

Adoption is slower than expected.

Usage metrics exist, but trust has not formed. I identify where clarity breaks down, where expectations misalign, and what is preventing users from relying on the system.

Your product has multiple stakeholders and competing realities.

Workflow-heavy systems often assume how people work instead of observing how they navigate constraints. I map lived behavior and surface friction points that stall momentum.

You’ve done the research, but it didn’t make it into the product.

The interviews happened. The synthesis was solid. But somewhere between the readout and the next sprint, the nuance was lost. I help teams structure research outputs so they survive the translation into product decisions and stay accessible as the product evolves.

You’re navigating AI product decisions and want a thinking partner.

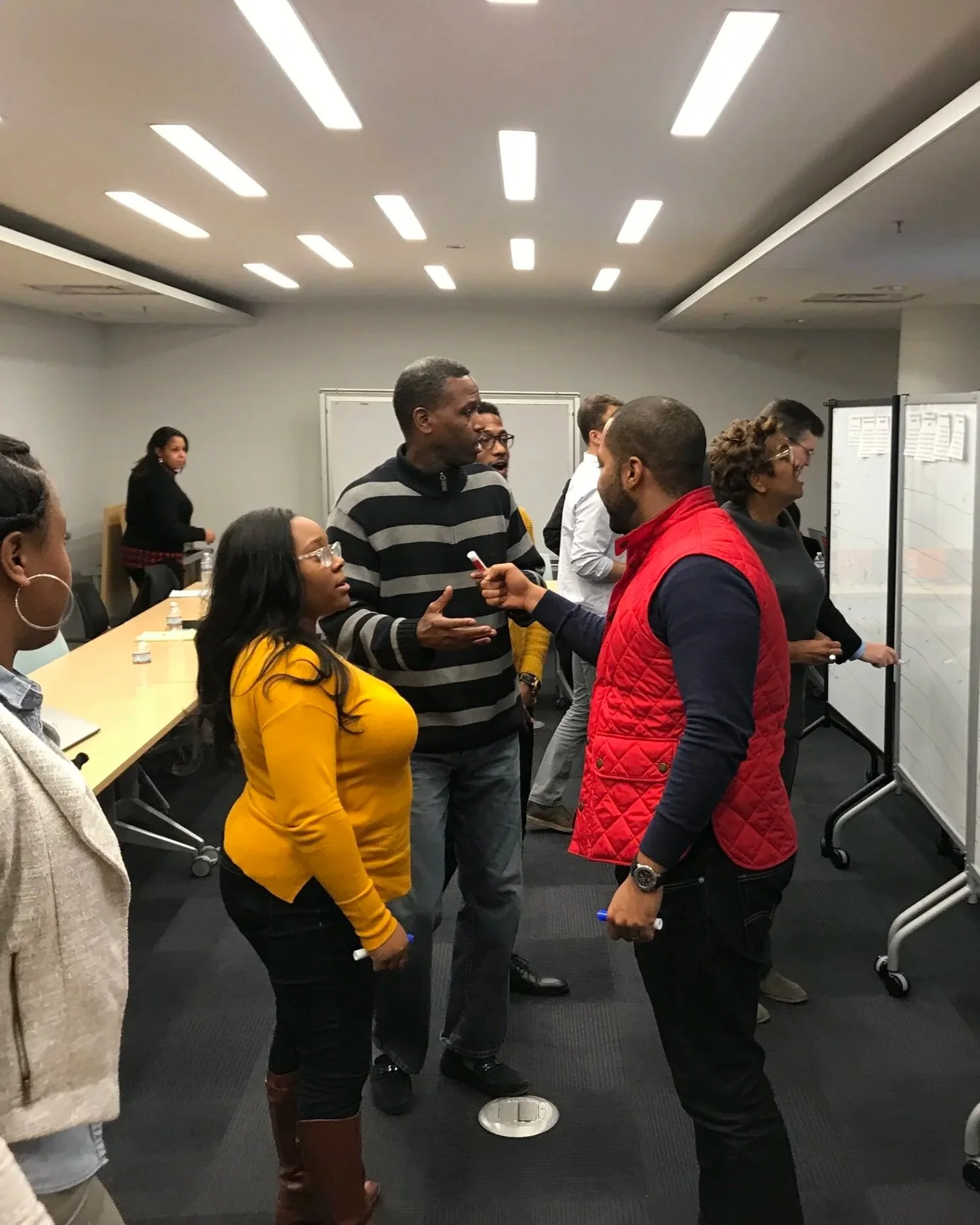

Sometimes you need a quick diagnostic, a sounding board, or a room full of peers who understand what you’re working through. The Trust & Adoption Audit gives you a clear-eyed read in one week. The AI Adoption Circle gives you ongoing strategic support alongside people doing similar work.

My work connects qualitative depth to roadmap decisions, especially in AI-enabled, regulated, and high-stakes environments where trust drives adoption.